Submissions are always executed in the context of a programming problem. The Sphere Engine service verifies whether a submission is a correct solution to the given problem.

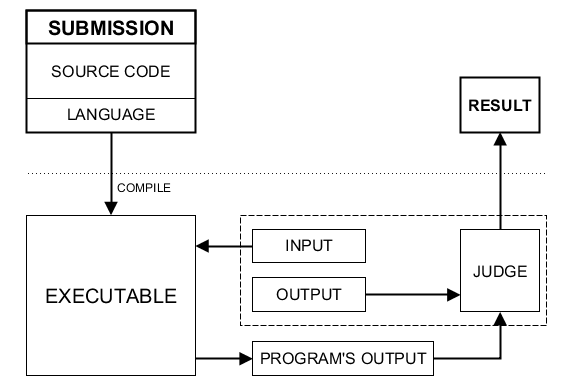

Correctness is verified by executing the submission. The program is run on input data that is part of the programming problem, and the execution produces, among other things, output data. As a final step, the so-called judge (a program that is also part of the programming problem) compares the generated output data with the reference output data (likewise part of the problem). The result of this comparison is a verdict that determines whether the submission correctly solves the programming problem.
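The flow above (execute, compare, verdict) can be sketched in a few lines. This is a minimal local simulation, not the Sphere Engine API: the submission is modelled as a Python function from input text to output text, and the judge shown here is a hypothetical one that compares whitespace-separated tokens. The names `verify`, `whitespace_insensitive_judge`, `sum_two` and the verdict strings are all assumptions for illustration.

```python
from typing import Callable

# Hypothetical model: a "program" is any function mapping input text to output text.
Program = Callable[[str], str]


def whitespace_insensitive_judge(output: str, reference: str) -> bool:
    """Hypothetical judge: compare outputs token by token, ignoring whitespace layout."""
    return output.split() == reference.split()


def verify(program: Program, input_data: str, reference_output: str) -> str:
    """Execute the program on the input data and let the judge produce a verdict."""
    output = program(input_data)
    if whitespace_insensitive_judge(output, reference_output):
        return "accepted"
    return "wrong answer"


def sum_two(data: str) -> str:
    """Example submission for a hypothetical 'add two integers' problem."""
    a, b = map(int, data.split())
    return f"{a + b}\n"


print(verify(sum_two, "2 3\n", "5"))  # accepted
print(verify(sum_two, "2 3\n", "6"))  # wrong answer
```

Keeping the judge a separate function mirrors the description above: the comparison rule belongs to the problem, not to the submission.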

The diagram below illustrates this scheme:

Test cases

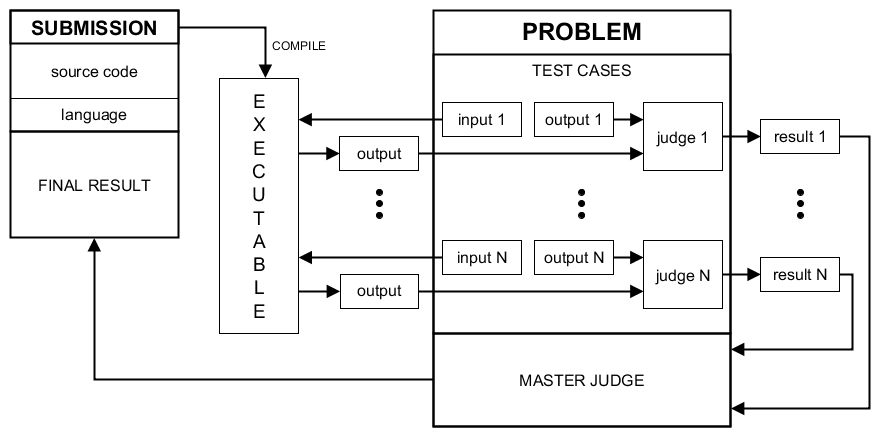

The description in the previous section is simplified: it assumes that the programming problem contains only one test set. In reality, a problem can define many so-called test cases.

A single test case consists of:

- input data,

- reference output data,

- test case judge (i.e., a program that compares the output data produced during the submission execution with the reference output data),

- execution time limit.
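The four components listed above map naturally onto a small record type. The sketch below is a hypothetical data model (the class `TestCase`, its field names and `exact_judge` are illustrative assumptions, not Sphere Engine identifiers); the judge is stored as a function taking the produced output and the reference output.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical judge signature: (produced output, reference output) -> is it correct?
Judge = Callable[[str, str], bool]


@dataclass(frozen=True)
class TestCase:
    input_data: str          # data fed to the submission
    reference_output: str    # expected output data
    judge: Judge             # test case judge comparing the two outputs
    time_limit_s: float      # execution time limit, in seconds


def exact_judge(output: str, reference: str) -> bool:
    """Hypothetical strict judge: outputs must match character for character."""
    return output == reference


tc = TestCase(input_data="2 3\n", reference_output="5\n", judge=exact_judge, time_limit_s=1.0)
print(tc.judge("5\n", tc.reference_output))  # True
```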

The user's submission is executed independently for each test case, and the executions are performed sequentially, one after another.

The result of executing a submission on a single test case is a verdict that determines whether the submission solves that test case correctly. For optimization problems, a score is determined as well.
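The per-test-case processing described above can be simulated as a sequential loop that produces one verdict per test case. This is a sketch under stated assumptions: the submission is a Python function, the time limit is checked only after the call returns (a real sandbox would stop the program when the limit is hit), and `run_all`, `TestResult` and the verdict strings are hypothetical names.

```python
import time
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class TestCase:
    input_data: str
    reference_output: str
    time_limit_s: float


@dataclass(frozen=True)
class TestResult:
    verdict: str
    time_s: float


def run_all(program: Callable[[str], str], cases: List[TestCase]) -> List[TestResult]:
    """Run the submission on each test case in order, one verdict per test case."""
    results = []
    for case in cases:  # sequential: the next test case starts after this one ends
        start = time.perf_counter()
        output = program(case.input_data)  # the program sees only this case's input
        elapsed = time.perf_counter() - start
        # Simplification: the limit is checked after the run instead of being enforced.
        if elapsed > case.time_limit_s:
            verdict = "time limit exceeded"
        elif output.split() == case.reference_output.split():
            verdict = "accepted"
        else:
            verdict = "wrong answer"
        results.append(TestResult(verdict, elapsed))
    return results


def double(data: str) -> str:
    return str(2 * int(data))


cases = [TestCase("4", "8", 1.0), TestCase("5", "11", 1.0)]
print([r.verdict for r in run_all(double, cases)])  # ['accepted', 'wrong answer']
```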

The final verdict

Once the verdicts (possibly with associated scores) for all test cases have been obtained, the final verdict (potentially with a final score) can be determined.

The component responsible for determining the final verdict is the so-called master judge: a program, also part of the programming problem, that determines the final result from the results of the individual test case executions.
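A master judge can follow many aggregation policies. The sketch below shows one plausible, hypothetical policy (not necessarily the one any given problem uses): the final verdict is "accepted" only if every test case was accepted, otherwise the first failing verdict is reported; the final score is the sum of per-test scores when they exist, or else the percentage of accepted test cases. The function name `master_judge` is an assumption.

```python
from typing import List, Optional, Tuple


def master_judge(verdicts: List[str], scores: Optional[List[float]] = None) -> Tuple[str, float]:
    """Hypothetical aggregation of per-test-case results into a final verdict and score."""
    if not verdicts:
        raise ValueError("at least one test case result is required")
    # Final verdict: accepted only if all test cases were accepted,
    # otherwise report the first non-accepted verdict.
    final = next((v for v in verdicts if v != "accepted"), "accepted")
    if scores is None:
        # No per-test scores: use the percentage of accepted test cases.
        score = 100.0 * sum(v == "accepted" for v in verdicts) / len(verdicts)
    else:
        # Optimization problems: add up the per-test scores.
        score = sum(scores)
    return final, score


print(master_judge(["accepted", "wrong answer", "accepted", "accepted"]))
# ('wrong answer', 75.0)
```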

The diagram below faithfully reflects the processing of the submission in the system:

Test case applications

Usually, a submission is neither completely correct nor completely incorrect. The test case mechanism makes it possible to quantify the degree of a solution's correctness. A good practice is to identify various aspects of a solution's correctness and to test each of them independently in a separate test case.

Examples of solution features that can be verified:

- correctness on the sample data given in the problem description,

- correctness on edge cases,

- correctness for various data ranges,

- the time complexity of the algorithm used (see: guide),

- memory complexity of the algorithm used (see: guide).
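To illustrate splitting these aspects into separate test cases, the sketch below builds one test case per aspect for a hypothetical problem ("read n, then n integers, print their sum"). The problem, its constraints (n = 1 as the assumed minimum, 200 000 elements as a "large" input) and the helper names are assumptions; the reference outputs come from a trusted reference solution. Memory complexity is not covered here.

```python
import random
from typing import Dict, List, Tuple


def solve(nums: List[int]) -> int:
    """Reference solution for the hypothetical problem: the sum of n integers."""
    return sum(nums)


def make_input(nums: List[int]) -> str:
    return f"{len(nums)}\n{' '.join(map(str, nums))}\n"


def build_test_cases(seed: int = 0) -> Dict[str, Tuple[str, str]]:
    """Return {aspect name: (input data, reference output)}."""
    rng = random.Random(seed)  # fixed seed keeps the generated data reproducible
    groups = {
        "sample": [1, 2, 3],                 # example from the problem statement
        "edge_single": [7],                  # n = 1, assumed minimum
        "edge_negative": [-5, -10, 0],       # negative and zero values
        "big_values": [10**18, 10**18],      # large value range
        "time_complexity": [rng.randint(-1000, 1000) for _ in range(200_000)],  # large n
    }
    return {name: (make_input(nums), f"{solve(nums)}\n") for name, nums in groups.items()}


cases = build_test_cases()
print(cases["sample"])  # ('3\n1 2 3\n', '6\n')
```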

Note: The test case mechanism was not designed for repeating a large number of analogous tests. Numerous analogous tests are best implemented within a single test case. For more information, see the Good test cases design section in the Related topics chapter.
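One common way to fit many analogous tests into a single test case is a multi-test input format: the first line gives the number of tests, followed by one test per line. The sketch below assumes such a format for a hypothetical "add two integers" problem; `pack`, `solution` and the format itself are illustrative assumptions, not a Sphere Engine requirement.

```python
from typing import List, Tuple


def pack(tests: List[Tuple[int, int]]) -> str:
    """Pack many analogous 'a b' tests into one input file: count, then one test per line."""
    lines = [str(len(tests))] + [f"{a} {b}" for a, b in tests]
    return "\n".join(lines) + "\n"


def solution(data: str) -> str:
    """A submission that reads the packed input and answers every test on its own line."""
    tokens = data.split()
    t = int(tokens[0])
    answers = []
    for i in range(t):
        a, b = int(tokens[1 + 2 * i]), int(tokens[2 + 2 * i])
        answers.append(str(a + b))
    return "\n".join(answers) + "\n"


tests = [(1, 2), (10, -4), (0, 0)]
input_data = pack(tests)
reference = "".join(f"{a + b}\n" for a, b in tests)
print(solution(input_data) == reference)  # True
```

A single judge run then checks all packed answers at once, instead of the system starting a separate execution for each of them.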